When you say Monitoring, Evaluation, and Learning (MEL) specialist, your mind may go to someone quite nerdy in a pair of glasses, illuminated by the glow of a computer screen practising a boring yet mysterious dark art in an ivory tower – you don’t necessarily think of a young woman gallivanting half-way up a landslide or striding into a room in a cat-print blouse (though you would be spot on with the ‘nerdy’ assumption).

Those with whom I have been working know how passionate I am about shattering the illusion of MEL practitioners, busting jargon, and pulling down the Chinese Wall between MEL and those engaging in day-to-day programming by using more participatory, accessible methods. (Also systems-thinking, but that’s a different blog).

Overly complex terminology does no-one any favours, and often I see M&E methods and frameworks dividing teams and causing confusion and friction, rather than empowering people to use their expertise in the best ways possible. Very rarely will someone leap in excitement at the phrase ‘contribution analysis’, but their ears will perk up if you say ‘let’s figure out how much we can credit this [observed result] to your intervention’. ‘Theory of Change’ conjures an image of linear boxes and arrows. ‘What’s the reasoning behind engaging in these activities given the problem you’re trying to solve’, however, results in a bit of a different conversation.

Perhaps I’m labouring the point here, but hopefully in a way that… makes sense. This is exactly the point and why I no longer use these kinds of terms with my programme teams. I’ve been talking at conferences about this recently (for example at UKES, link here to a poorly recorded video), and over the years I have been working to see how far I can push my participatory, MEL-is-for-all approach.

A lot of my work over the years has allowed me to engage with incredible experts and organisations who are leaders in their sector, but may not necessarily feel on the same page as you if you ask them to fill in a LogFrame, do a PEA, or draw up an actor-based systems map. Development language isn’t intuitive, and the more I speak to organisations working in development (especially for the first time) the more I realise that we MEL bods are among the absolute worst when it comes to lingo. As someone so interested in making MEL accessible, I have been dedicating a chunk of my time to dispelling it.

This issue of language is true in any kind of programme. If mitigating an outbreak, you engage epidemiologists. If mitigating barriers in schooling, you engage educational expertise. If trying to ascertain areas of natural hazards, you might engage geological expertise.

All of these experts are experts in their fields (no pun intended for the penultimate example), but that doesn’t necessarily mean they are development experts. When it comes to ODA programming, things get a bit more complicated. Having to suddenly draft theories of change, logframes, and monitoring processes, as well as more qualitative reporting, calls for a new set of skills that comes with a new vocabulary. Hours get spent trying to tell an ‘output’ from an ‘outcome’, as well as trying to explain the impact that their small, targeted pieces of work could have on a country or a system… without ‘impact’ being explained or defined as a concept.

Needless to say, this ends up being a bit much. In my experience, this often means that experts and organisations delivering this kind of targeted support do not feel kindly towards MEL. Programmes end up in that age-old situation of bean-counting, and sometimes end up trying to deliver an impact that was impossibly out of scope.

Often teams end up led astray by traditional development language and get muddled about what they could reasonably cause or measure. Let us take an example. This is purely fictitious and does not represent any actual people, programmes, or realities.

Let us say that a team is trying to address the problem of poor economic prospects in mineral-rich regions in Beraria. As with all complex problems, this is due to a variety of factors. Historically, mineral wealth/natural resource maps had not acknowledged the minerals, and test data was not stored in country. Further to this, the extent of the mineral wealth was never communicated to the authorities, and so private sector companies had capitalised instead. In this scenario, this is partly because the maps had been hand drawn by a variety of international geologists some time ago, such that there were pieces of maps in files… but not done in the same style nor to the same scale. They were inaccurate, outdated, and didn’t join up into something coherent. Because those geologists airdropped into the country, did the work and testing, then left, capacity wasn’t transferred and the work sat on shelves like old potatoes. The capacity problem was exacerbated by the lack of further education on geology. This mixes together into a perfect storm where private sector companies can mine to their heart’s content while local and central government have no grasp of the country’s mineral wealth, thereby missing economic opportunities.

This is where a programme can come in. One workstream could look at building local capacity to develop and maintain geological skills, and another could work with local authorities to support policies to protect natural resources. To build these skills, the programme would need expertise in natural resources: experts who understand how to build this capacity to identify and protect natural resource wealth. Experts who breathe this day in, day out.

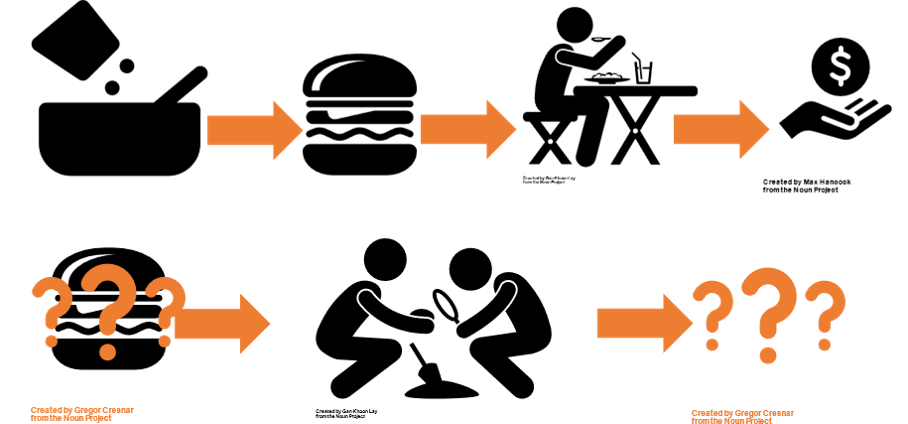

However, what often happens with these dynamic interventions led by consortiums of specialists and experts is that this doesn’t always get well-represented. What can happen is that a complex intervention ends up with a theory of change a little like the below that fails to capture what is actually changing.

This is because the whole traditional model and the ‘input’, ‘output’, ‘outcome’, ‘impact’ language just doesn’t describe change (see here for more on that). When you are making and selling hamburgers, fine, you can state you output hamburgers and have an outcome of people buying them to have an impact of profit. It’s a simple situation and only needs a simple explanation. When you are trying to train budding natural resource specialists or geologists and support their development so that they can build and grow their natural resource map and develop passion so that they stay in their roles (amongst other things), you can’t. The words simply don’t make sense and don’t translate into most languages.

Just as natural resource maps are inaccurate because everyone was doing different things, it’s the same with MEL. Make it accessible. Using better language that doesn’t subscribe to the ego-stroking of technical terminology gets the best out of everyone concerned. If I ask stakeholders what they are hoping to output they often find it challenging and may speak about policy or training approaches. When I ask them what services they are providing it is easy: on-the-job training, digital data training, coaching… you name it.

If I were to ask this fictitious team about outcomes and impacts, the response would probably be something around having a natural resource protection policy and increasing mineral wealth in the entire country. This misses the behavioural element that everything hinges on, and results in the team aiming to affect something that is far out of reach of this local programme. Instead, worshipping at the altar of John Mayne and asking teams what behaviour changes they need to see, and what that translates into in terms of sustainable change, results in a different answer. This leads to quite an interpretable change pathway a little like the below. This is linear and rudimentary (the real demonstration of complexity would need an additional layer and some feedback loops, and more investigation of potential impact), but it still demonstrates the power of using plain language and how this can explain an actual change process.

This isn’t rocket science… this is making complexity accessible. Doing away with some of the linguistic barriers has allowed me to really support this team to demonstrate what they actually do. As someone once said to me: “When I heard the kinds of questions you were asking I really understood what MEL encompasses… Hearing what they said made me realise how far we’ve come – I get so hung up on the binary… that I miss the bigger picture”.

This is why we need to use better language. Old methods just do not work and we need to make things accessible to the teams who are delivering. If we can’t help them explain what they’re achieving, we cannot capture and evaluate it. Everything can be translated – from landslides to health system reform. Some landslide specialists I once worked with proved this point to me: despite being leading world experts, they had a wonderful analogy involving a jam sandwich to explain how some landslides occur. I could understand this and see what the process was. However, when one cracked the joke of “Oh, well, you know how it is. One person’s granite is another person’s monzodiorite” I was somewhat less on-board, though the other specialists liked it. MEL should do the same. Save the technical language for other evaluators.

I’m not alone in thinking this. One time I had an interesting moment that supported this line of thinking. Half-way up a landslide the specialist I was speaking to (not someone I worked with) learned I was a MEL adviser and called over a member of her staff, who was their MEL adviser. We had a great time. He shared my views about complex language – he wasn’t inducted into MEL by a flashy consultancy and instead had learned it on-the-go. As such he had a wealth of experience of breaking things down, and shared some of the ways he was making MEL accessible in his work with the community. We’re still in touch now and he’s been a frequent source of wisdom.

While we were giggled at slightly for being lost in nerdy conversations about programme theory and behaviour change mapping whilst scrambling over rocks (see top of this post), the point still stands: we understood one another better without jargon.

As a stakeholder once said to me “It’s the way that YOU describe it that I think makes it interesting”… Which perplexed me, and by no means is meant to be any sort of narcissistic tooting of my own horn; complex MEL is often trying to access the same concepts across the board in comparably layered ways (unless you’re desperately trying to use purely stats to interpret complex shifts in behaviour, in which case go hang your head in shame!). It was a genuine shame that I was the only person using accessible language and engaging examples in his experience.

And this is why I’m passionate about this point. Time after time after time again, concepts and language like ‘outputs’ are nebulous and inaccessible. I have never yet had accessible language and amusing analogies do me wrong. Let’s do away with the jargon and empower our programme delivery teams to climb into the MEL process.